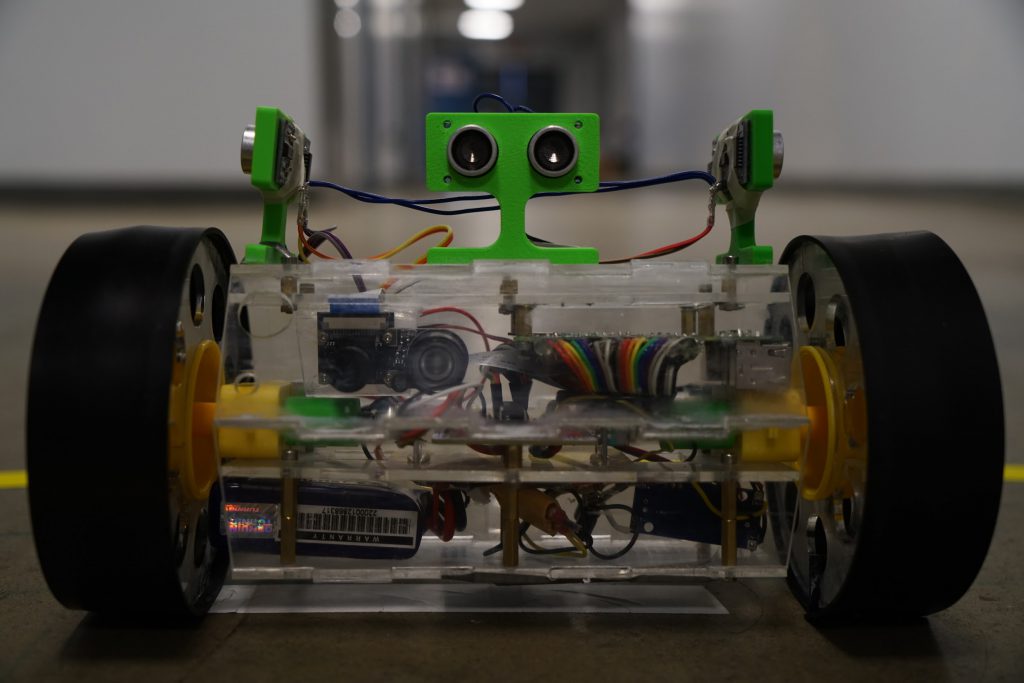

Introducing the Tubular Robot project

The Tubular Robot is a project that four teammates and I built in Spring 2018 for the class ECE 196: Project in a box. The code is hosted on GitHub.

The Tubular Robot is a Raspberry Pi-powered robot equipped with two encoders and three ultrasonic proximity sensors. Sensor data is streamed wirelessly to the client, which builds a map of the surroundings as the robot moves. The encoders on both motors let the robot know precisely where it is at every timestamp. It has both an autonomous obstacle-avoiding mode (based on the front ultrasonic proximity sensor) and a wirelessly controlled manual driving mode. The robot is also equipped with a night vision camera that streams video wirelessly to the user.
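To make the mapping idea concrete, here is a minimal sketch of how range readings could be turned into map points once the robot's pose is known. It is not the project's actual code: the function name `ranges_to_points`, the sensor names, the mounting angles (left, front, right at +90°, 0°, -90°), and the 300 cm range cutoff are all assumptions for illustration.

```python
import math

# Hypothetical mounting angles (radians) relative to the robot's heading.
# The real sensor layout is not described in this post.
SENSOR_ANGLES = {"left": math.pi / 2, "front": 0.0, "right": -math.pi / 2}


def ranges_to_points(x, y, theta, ranges_cm, max_range_cm=300.0):
    """Project ultrasonic ranges into world-frame obstacle points.

    x, y, theta  -- robot pose (cm, cm, radians)
    ranges_cm    -- dict mapping a sensor name to its measured distance in cm
    max_range_cm -- assumed cutoff; readings beyond it are ignored
    """
    points = []
    for name, distance in ranges_cm.items():
        if distance is None or not (0.0 < distance <= max_range_cm):
            continue  # skip missing or out-of-range echoes
        angle = theta + SENSOR_ANGLES[name]
        points.append((x + distance * math.cos(angle),
                       y + distance * math.sin(angle)))
    return points
```

Each valid reading becomes one point on the map, so plotting the points collected over a drive gives a rough outline of the walls and obstacles the robot passed.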

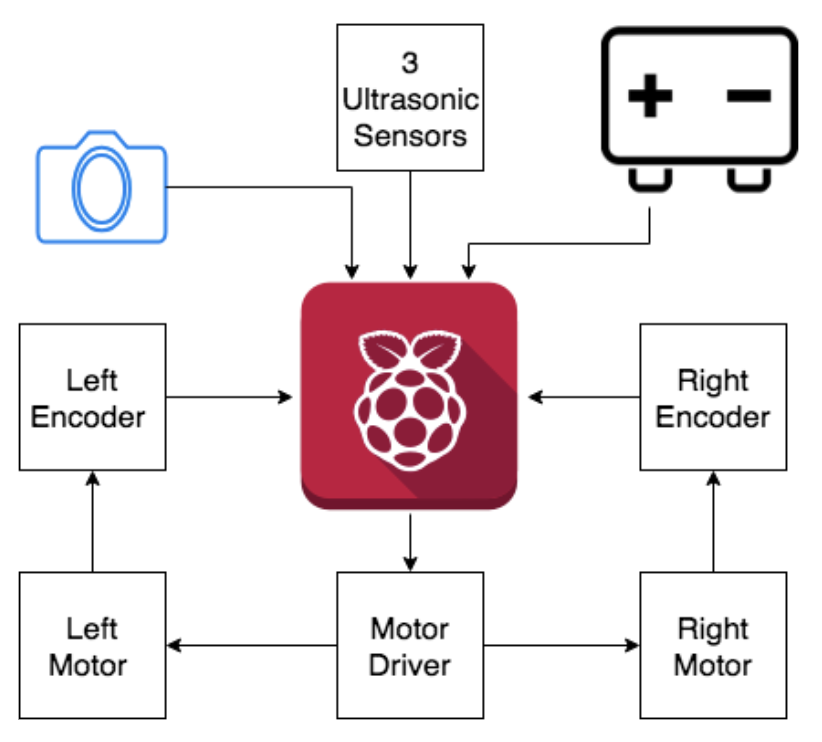

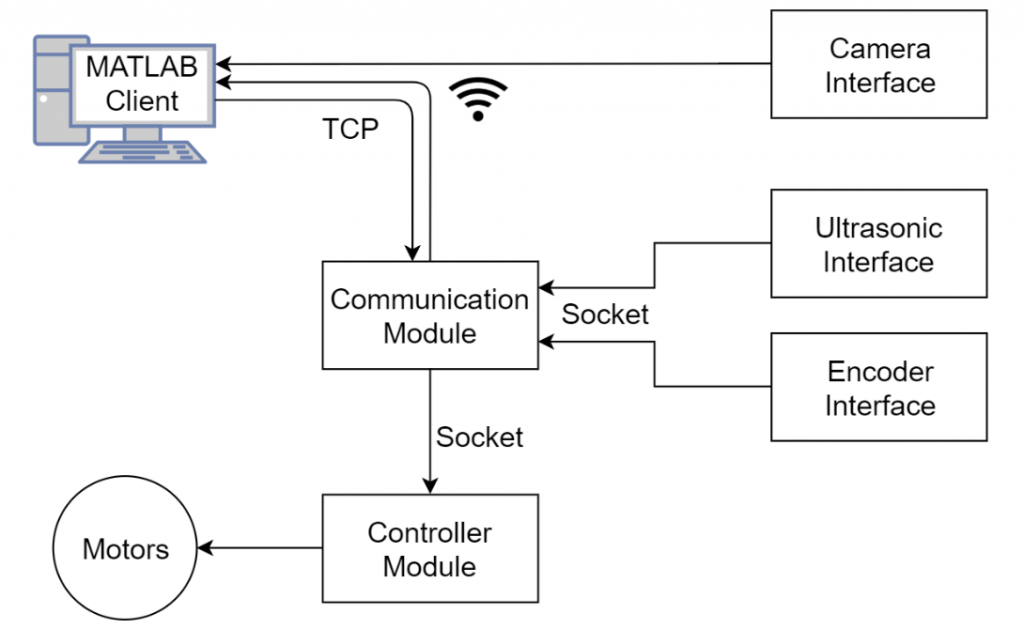

Here is a simple chart demonstrating what has been put together:

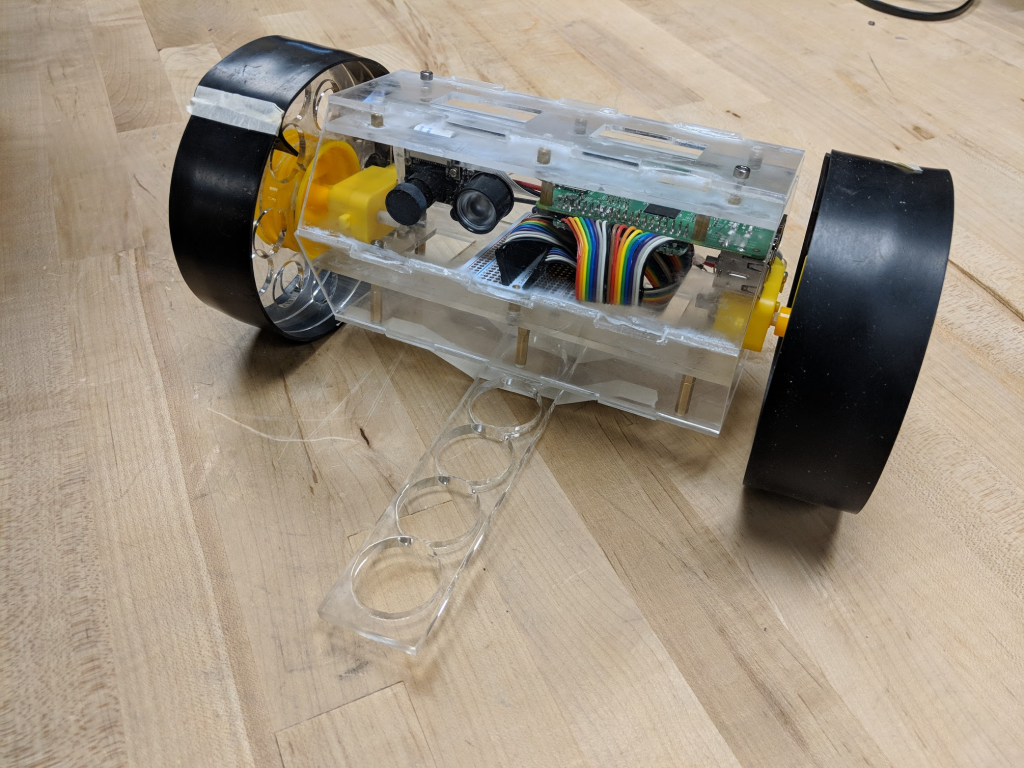

Assembly

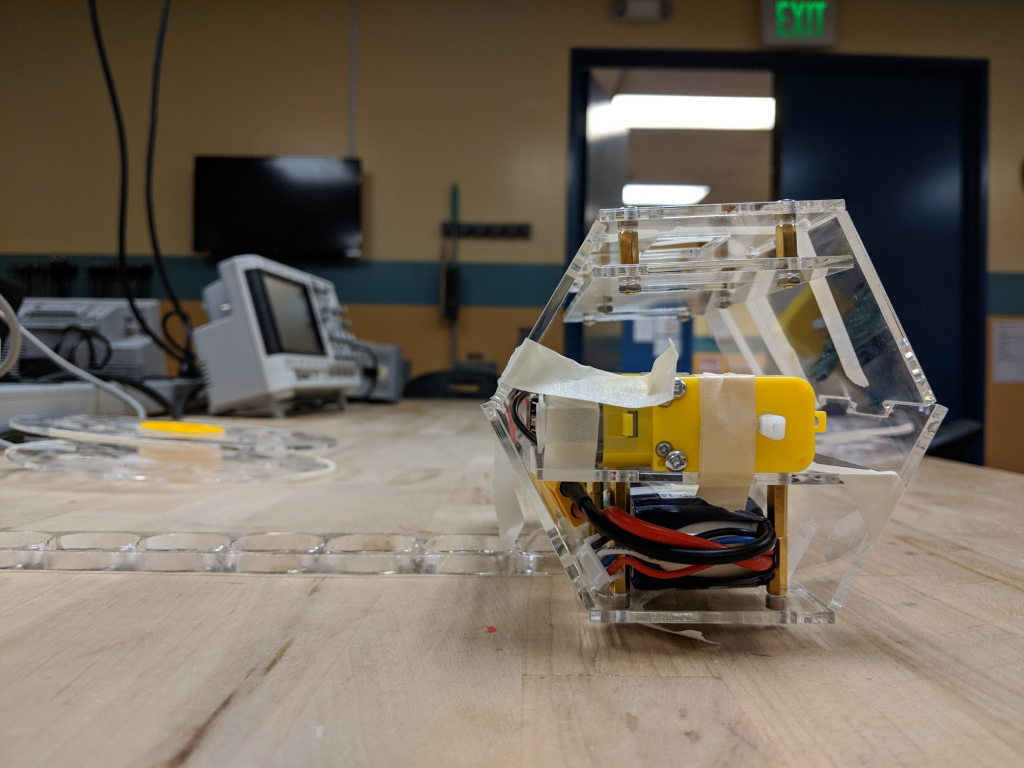

From the picture, you can tell that it’s literally a “tubular” robot. The “tube” is a hexagonal tube made by joining six laser-cut acrylic panels. The two wheels are also made of 8 laser-cut half-circles glued together and covered with a rubber band.

The Wheels

The “Tubular” Body

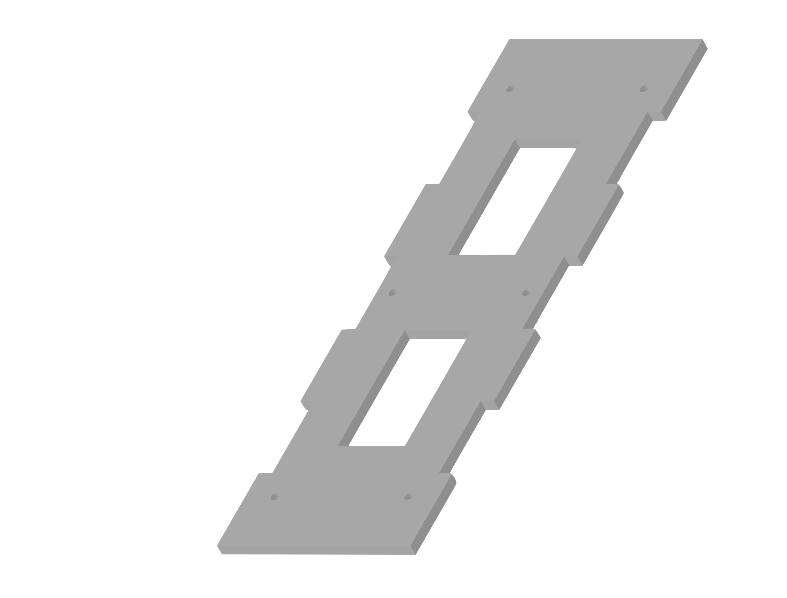

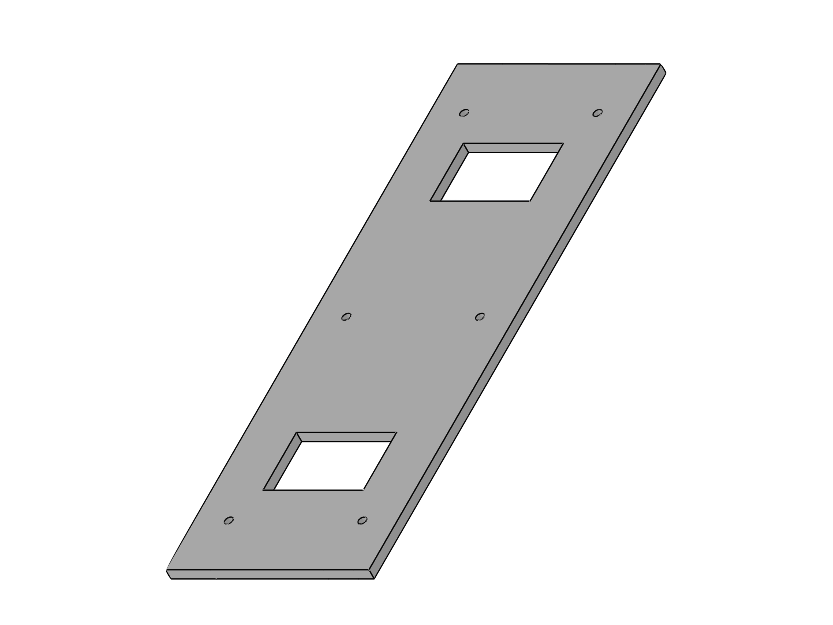

The Middle Platform

A platform in the middle of the “tube” separates the battery from the wires that connect the sensors, and provides a solid surface for the Raspberry Pi and camera to rest on.

Software

This project involves a lot of sensor I/O, including three ultrasonic proximity sensors and two motor encoders.

As shown in the chart, to make all the sensors work together, we used Python's multi-threading on the Raspberry Pi. Five separate programs ran at the same time, each doing its own task. Apart from the camera interface, the other four are written in Python and communicate with each other through sockets. The main control/communication Python module also communicates over Wi-Fi, using TCP/IP, with the client software running in MATLAB on a PC.
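As a rough illustration of this pattern, the sketch below runs a sensor worker in its own thread and passes readings to a control loop over a socket as newline-delimited JSON. It is not the project's actual code: the function names, the message format, and the fake readings are hypothetical, and `socket.socketpair()` stands in for the real sockets between modules so the example is self-contained.

```python
import json
import socket
import threading


def sensor_worker(conn, readings):
    """Send each reading as one JSON line, then close the socket."""
    with conn:
        for reading in readings:
            conn.sendall((json.dumps(reading) + "\n").encode("utf-8"))


def control_loop(conn):
    """Collect newline-delimited JSON messages until the sender closes."""
    received = []
    with conn, conn.makefile("r", encoding="utf-8") as stream:
        for line in stream:
            received.append(json.loads(line))
    return received


def run_demo():
    # A connected socket pair replaces the real inter-module sockets here.
    sensor_end, control_end = socket.socketpair()
    # Made-up readings, for illustration only.
    fake = [{"sensor": "front", "cm": 42.0}, {"sensor": "left", "cm": 17.5}]
    worker = threading.Thread(target=sensor_worker, args=(sensor_end, fake))
    worker.start()
    messages = control_loop(control_end)
    worker.join()
    return messages
```

Framing each message as one line of JSON keeps the receiver simple: it reads line by line and never has to guess where one reading ends and the next begins.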

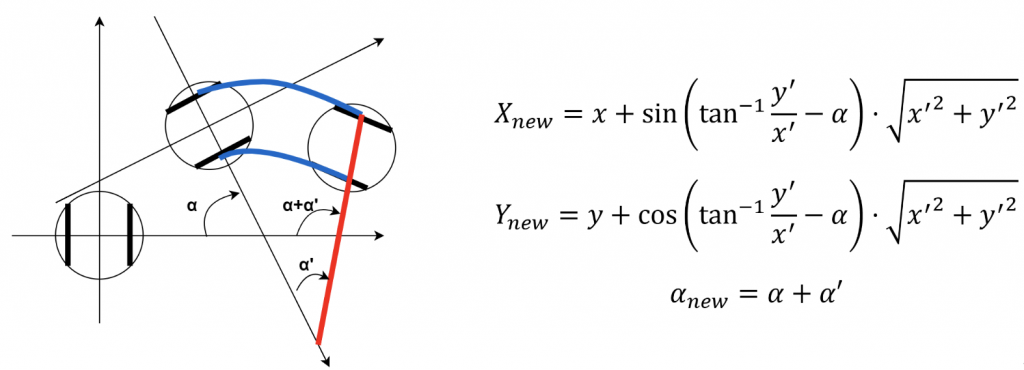

Here is part of the calculation done on the MATLAB side to update the robot's location from the received encoder data.
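For readers who want to experiment, below is a minimal sketch of a standard differential-drive odometry update. The project's own calculation runs in MATLAB and may differ; this version is written in Python to match the robot-side code. The function name `update_pose` and the default values for ticks per revolution, wheel radius, and wheel base are placeholders, not measurements from the robot.

```python
import math


def update_pose(x, y, theta, d_ticks_left, d_ticks_right,
                ticks_per_rev=360, wheel_radius=0.03, wheel_base=0.15):
    """Advance the pose (x, y in meters, theta in radians) by one encoder step.

    d_ticks_left / d_ticks_right are the tick counts since the last update.
    The three keyword defaults are assumed values for illustration.
    """
    # Distance travelled by each wheel since the last update.
    dist_per_tick = 2 * math.pi * wheel_radius / ticks_per_rev
    d_left = d_ticks_left * dist_per_tick
    d_right = d_ticks_right * dist_per_tick

    # Motion of the robot's center and its change in heading.
    d_center = (d_left + d_right) / 2
    d_theta = (d_right - d_left) / wheel_base

    # Midpoint approximation: move along the average heading of the step.
    x += d_center * math.cos(theta + d_theta / 2)
    y += d_center * math.sin(theta + d_theta / 2)

    # Wrap the heading into [-pi, pi).
    theta = (theta + d_theta + math.pi) % (2 * math.pi) - math.pi
    return x, y, theta
```

Calling `update_pose` once per received encoder message and storing each returned pose gives the trajectory that the map is drawn around.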

The code is hosted on GitHub. More details will be posted soon!